No More Hustleporn: How does OpenAI plugins/Browser work? A thread of detailed analysis on the server interaction. S/o to @CrisGiardina for helping with access.

Tweet by Vaibhav Kumar

https://twitter.com/vaibhavk97

@vaibhavk97:

How does OpenAI plugins/Browser work? A thread of detailed analysis on the server interaction. S/o to @CrisGiardina for helping with access.

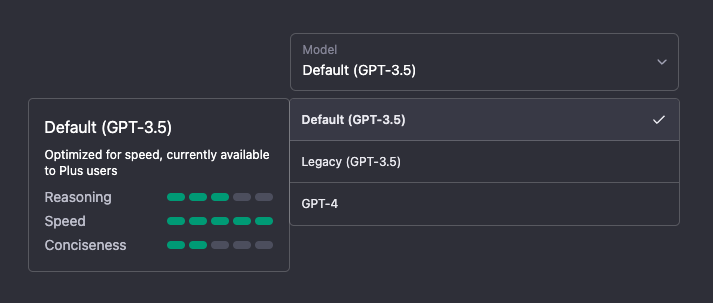

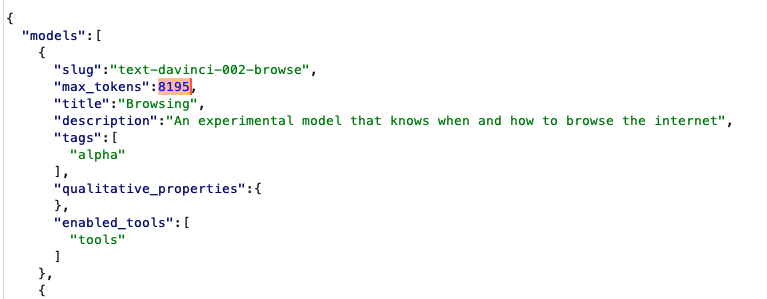

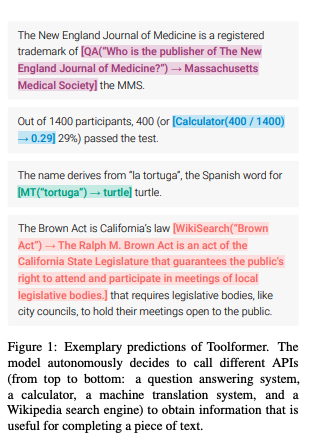

TLDR: Browsing (and possibly plugins) uses a different model with 8k sequence length support and a Toolformer-like operation structure.

@vaibhavk97:

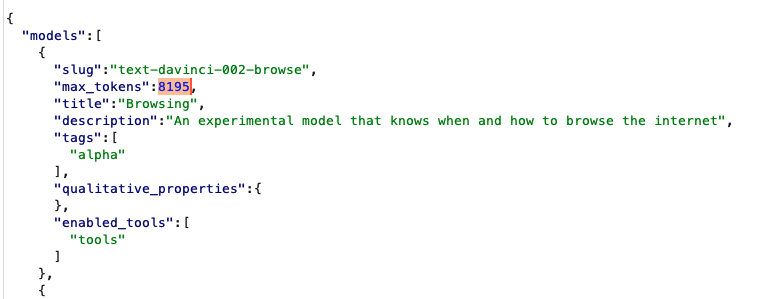

If you look at the model names, this one is called text-davinci-002-browse and supports a max_tokens of 8197.

So if you don't have access to the GPT-4 8k API but do have access to plugins, you can get a similar experience with longer context.

@vaibhavk97:

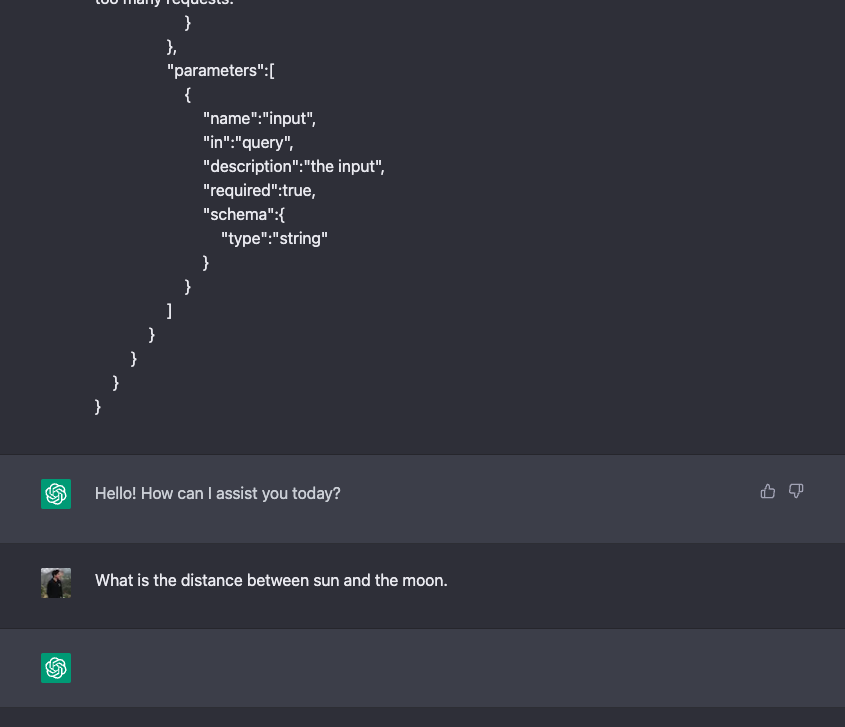

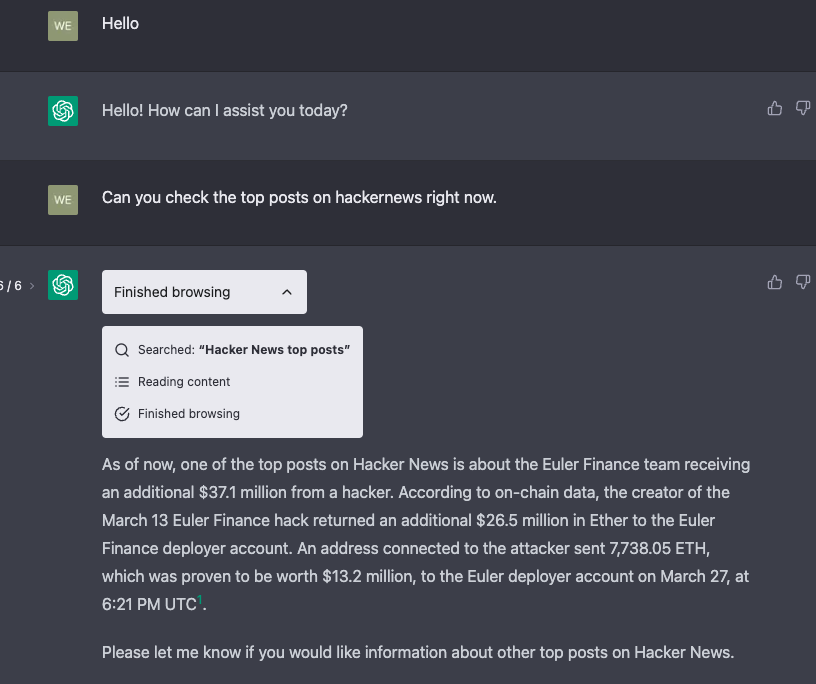

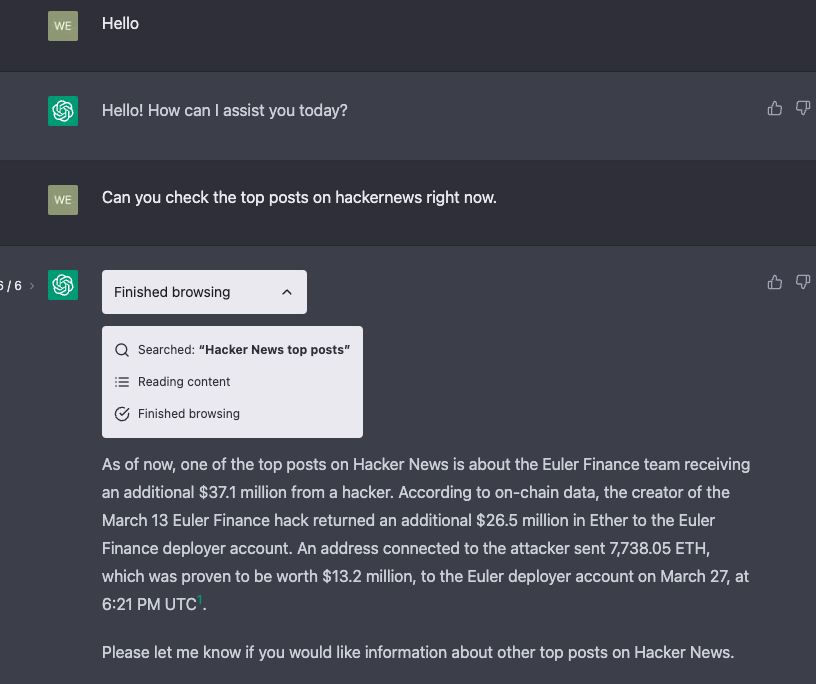

Querying is simple: you chat as in any other ChatGPT session, and the model is smart enough to make web requests. But how? First, let's look at the client-side view of the chat session:

@vaibhavk97:

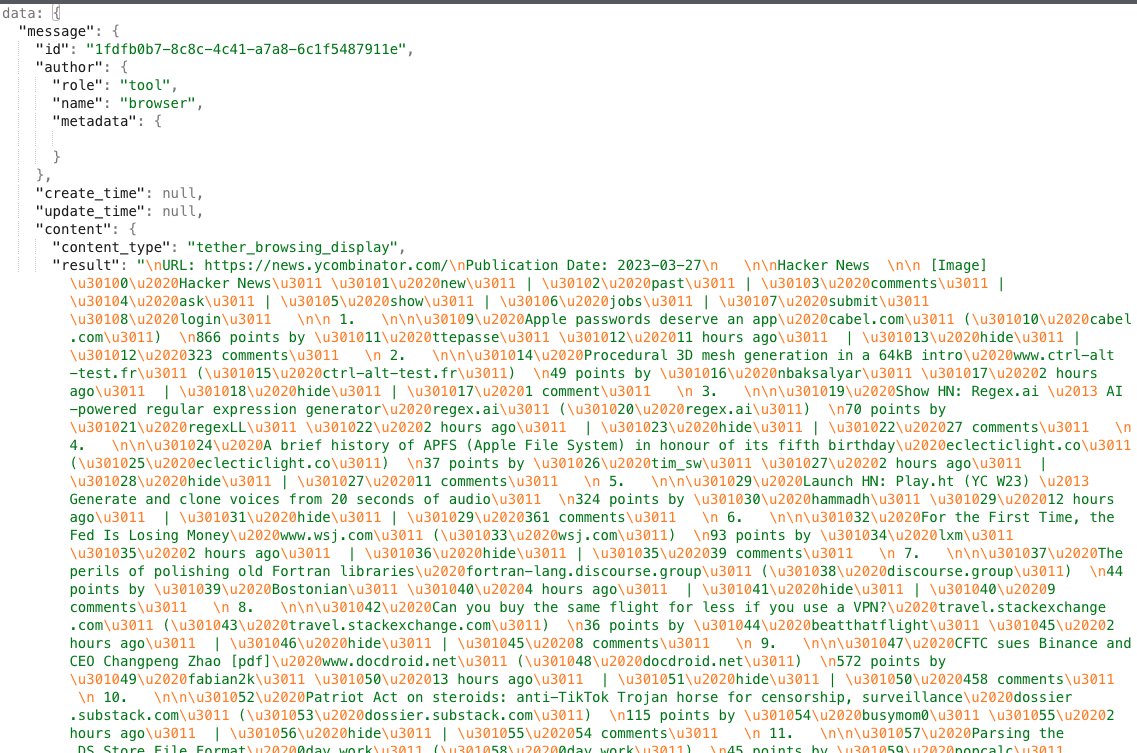

Now, let's take a look at the backend chat logs. There are three actors in the conversation now:

1. User: The person chatting.

2. Assistant: GPT model

3. Browser: The tool which is used to get information.

The first part of the chat (after user prompt) is as follows:

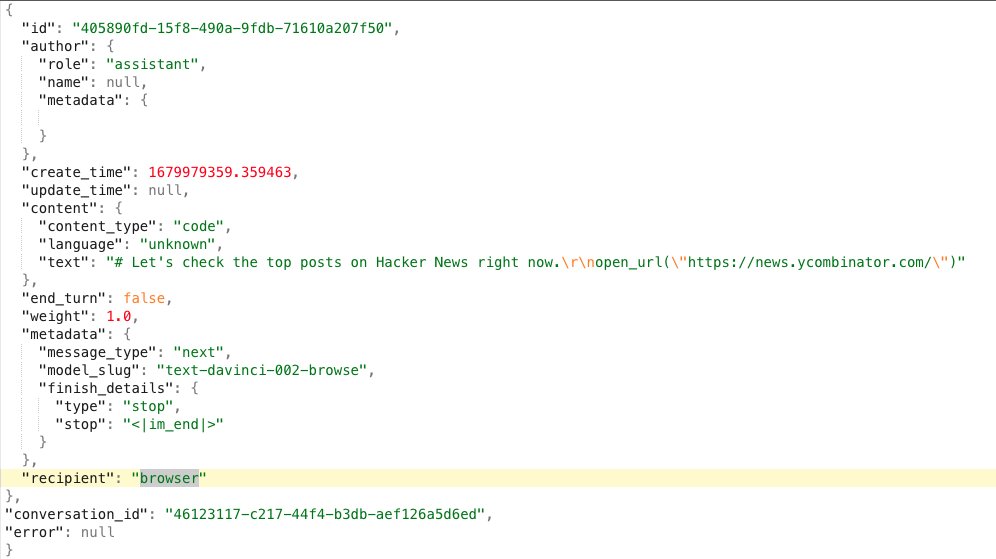

@vaibhavk97:

Notice the text field: this is not typed by the user but generated by the model as a prompt for the tool (the browser here).

Notice the recipient field: usually it's set to "all", but in this case it is set to "browser", so this message is used internally and not shown to the user.
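A minimal sketch of this routing, assuming a simplified message shape. Only the `text` and `recipient` fields and the values "all" and "browser" come from the logs; the dictionary layout, the `visible_to_user` helper, and the sample texts are hypothetical.

```python
# Hypothetical, simplified message records; the real backend payload has more fields.
messages = [
    {"role": "user", "recipient": "all", "text": "What is the latest news on X?"},
    # Generated by the model as a prompt for the tool, not typed by the user.
    {"role": "assistant", "recipient": "browser", "text": "latest news on X"},
    {"role": "assistant", "recipient": "all", "text": "Here is what I found..."},
]


def visible_to_user(message):
    """Return True when a message is addressed to everyone rather than to a tool."""
    return message.get("recipient", "all") == "all"


# Messages addressed to "browser" are used internally and filtered out here.
shown = [m["text"] for m in messages if visible_to_user(m)]
print(shown)
```

In this sketch the middle message never reaches the chat window, which matches what the client-side view shows.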

@vaibhavk97:

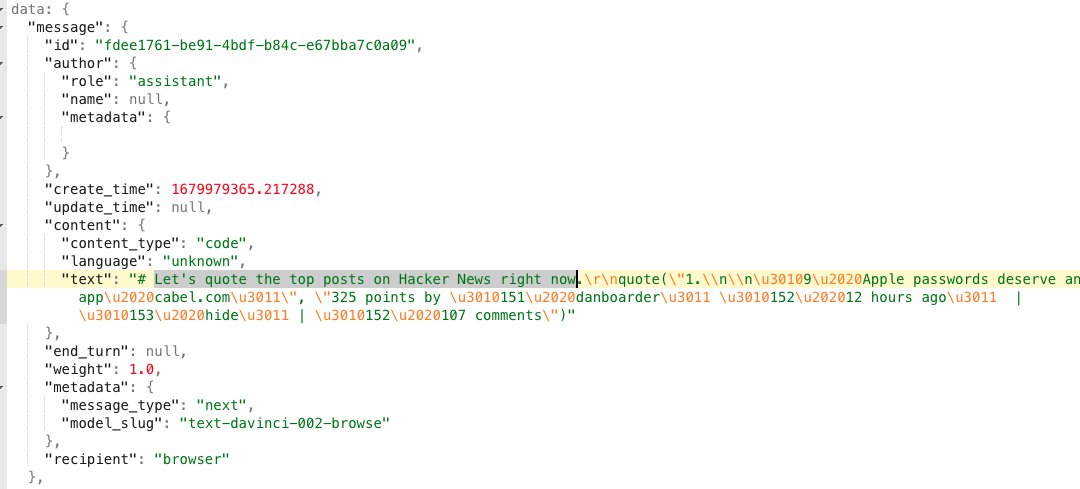

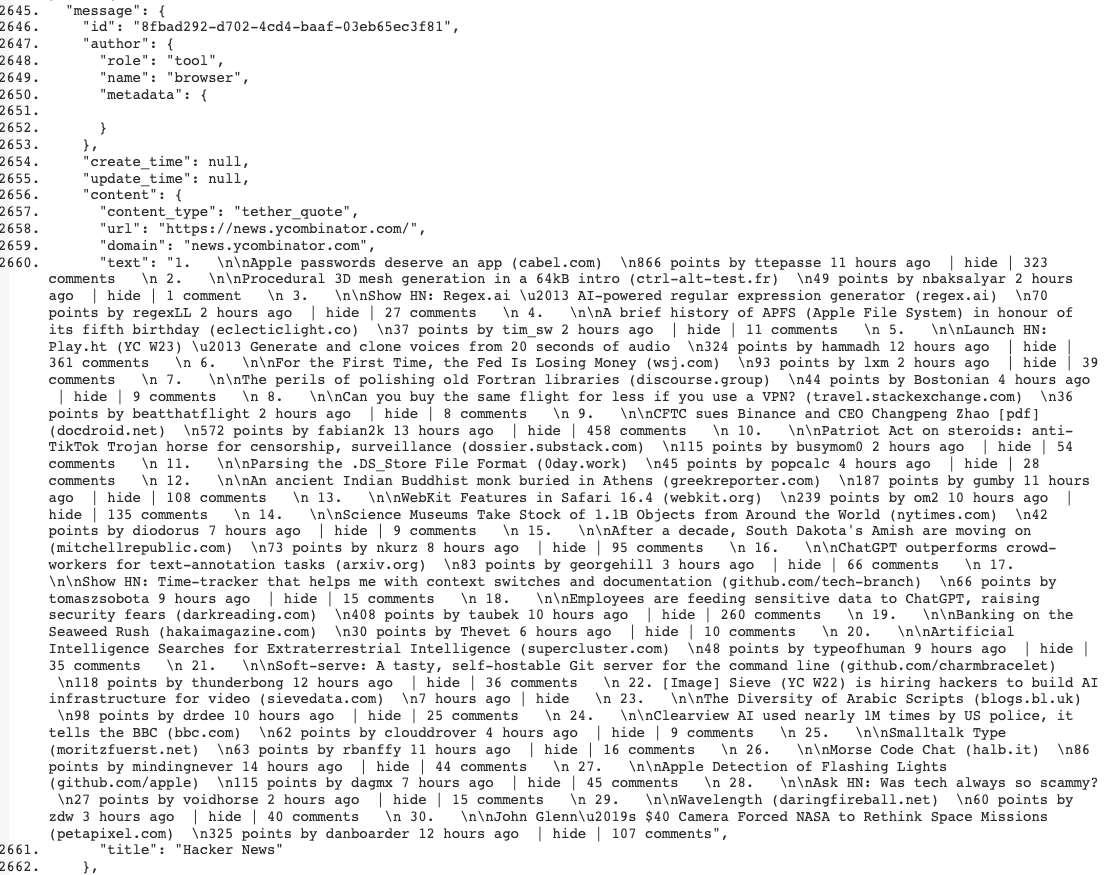

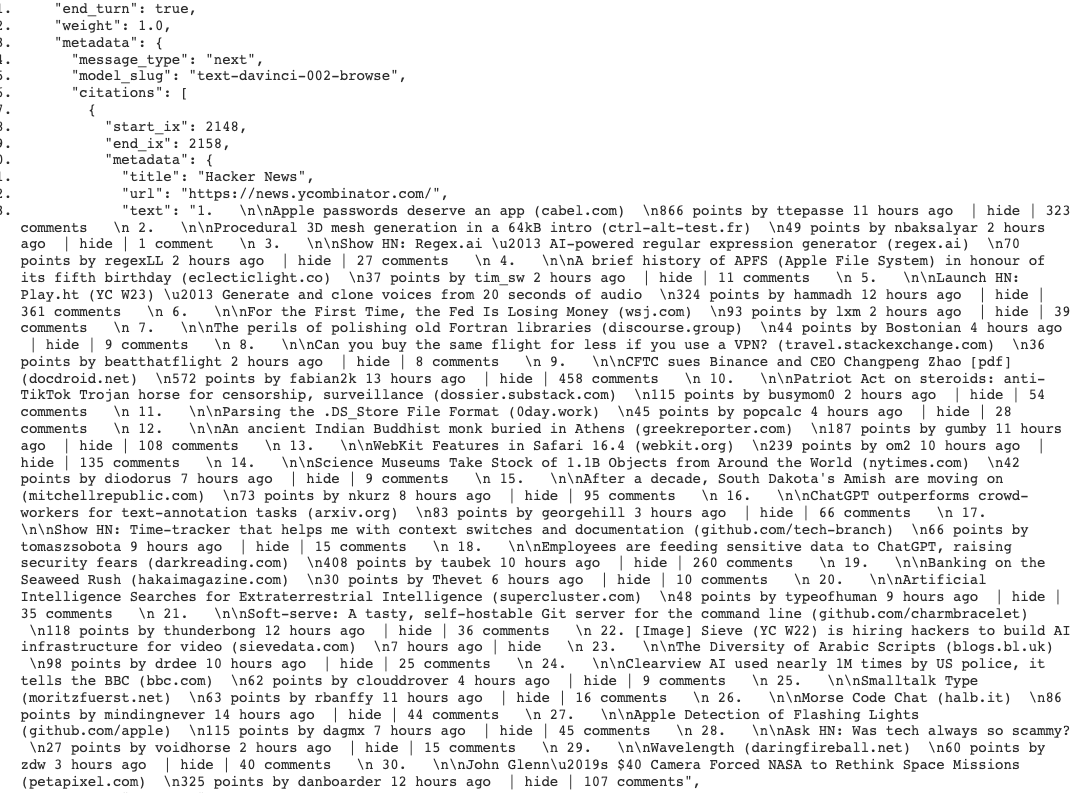

The second part of the request includes the browser response. Notice that the role is tool, the name is browser, and there is a content_type field. This appears to be output from a web browser, sanitized for input to the LM, along with some metadata for citations.
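As a rough illustration of such a tool message, here is a sketch assuming a flat record. The `role`, `name`, and `content_type` fields come from the logs; the `ToolMessage` class, the `metadata` layout, the chosen `content_type` value (`tether_browsing_display`, one of the types the API lists), and the example URL are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class ToolMessage:
    role: str          # "tool" in the observed logs
    name: str          # "browser" in the observed logs
    content_type: str  # the value used in the example below is an assumption
    text: str          # sanitized page text meant for the language model
    metadata: dict = field(default_factory=dict)  # hypothetical citation metadata


def is_browser_output(message: ToolMessage) -> bool:
    """Return True for messages produced by the browser tool."""
    return message.role == "tool" and message.name == "browser"


example = ToolMessage(
    role="tool",
    name="browser",
    content_type="tether_browsing_display",
    text="Sanitized page text would go here.",
    metadata={"url": "https://example.com"},
)
print(is_browser_output(example))
```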

@vaibhavk97:

What follows is a series of calls to the browser to provide quotations based on the web results, possibly to reduce hallucination and provide better grounding. (Can we assume the browser is more than just a browser?)

@vaibhavk97:

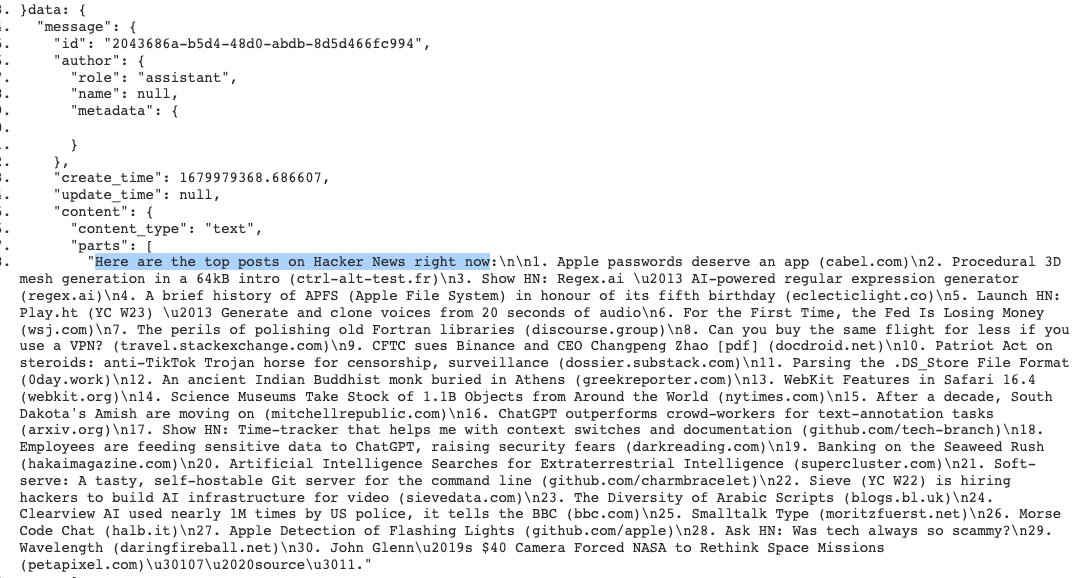

Once these two parts are over, the model starts generating the final response that will be shown to the user.

@vaibhavk97:

It's a long and slow process, with the total conversation size for a single-question chat exceeding 512 KB.

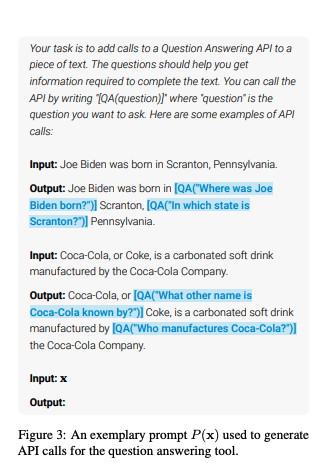

The "browser inputs" and the changes in message author indicate a Toolformer-like architecture, where the relevant tool output is provided to the model as input.
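The overall flow can be sketched as a small loop, assuming the model keeps addressing the tool until it finally addresses the user. This is not OpenAI's implementation: `fake_model`, `fake_browser`, `run_turn`, and the message layout are stand-ins invented for illustration.

```python
def fake_model(history):
    """Stand-in for the model: ask the browser once, then answer the user."""
    if not any(m["role"] == "tool" for m in history):
        return {"role": "assistant", "recipient": "browser",
                "text": "look up: " + history[0]["text"]}
    return {"role": "assistant", "recipient": "all",
            "text": "Answer based on: " + history[-1]["text"]}


def fake_browser(query):
    """Stand-in for the browser tool: returns placeholder page text."""
    return {"role": "tool", "name": "browser",
            "text": "page text for [" + query + "]"}


def run_turn(user_text, max_steps=5):
    """Alternate between model and tool until a message is addressed to 'all'."""
    history = [{"role": "user", "recipient": "all", "text": user_text}]
    for _ in range(max_steps):
        reply = fake_model(history)
        history.append(reply)
        if reply["recipient"] == "all":
            return reply["text"], history  # final, user-visible response
        # Internal message: hand the model's prompt to the tool and record its output.
        history.append(fake_browser(reply["text"]))
    raise RuntimeError("model never addressed the user")


answer, history = run_turn("How do plugins work?")
print([m["role"] for m in history])
print(answer)
```

Every intermediate message stays in the history, which is one plausible reason the conversation for a single question grows so large.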

@vaibhavk97:

I noticed these fields in the API back in December. This just means that OpenAI has been sitting on the model and related developments for a long time, well before the Toolformer paper even came out.

mobile.twitter.com/vaibhavk97/sta…

@vaibhavk97:

It can support multiple types of input according to the API:

1. text

2. code

3. tether_browsing_code

4. tether_browsing_display

5. tether_quote

6. error

7. stdout

8. stderr

9. image

10. execution_output

11. masked_code

12. masked_text

13. unit_test_result

14. system_error
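For anyone inspecting these logs programmatically, the list above can be captured as an enumeration. The string values are the ones listed; the `ContentType` class name, the member names, and the grouping by the `tether_` prefix are my own conventions, not part of the API.

```python
from enum import Enum


class ContentType(str, Enum):
    """Content types listed above; class and member names are illustrative only."""
    TEXT = "text"
    CODE = "code"
    TETHER_BROWSING_CODE = "tether_browsing_code"
    TETHER_BROWSING_DISPLAY = "tether_browsing_display"
    TETHER_QUOTE = "tether_quote"
    ERROR = "error"
    STDOUT = "stdout"
    STDERR = "stderr"
    IMAGE = "image"
    EXECUTION_OUTPUT = "execution_output"
    MASKED_CODE = "masked_code"
    MASKED_TEXT = "masked_text"
    UNIT_TEST_RESULT = "unit_test_result"
    SYSTEM_ERROR = "system_error"


# Types whose names share the "tether_" prefix.
tether_types = [c.value for c in ContentType if c.value.startswith("tether_")]
print(tether_types)
```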

@vaibhavk97:

The system prompt for the browser can be found here:

mobile.twitter.com/CrisGiardina/s…

@CrisGiardina:

[ChatGPT + Browsing Plugin]

Let's extract the initial prompt to figure out how the "browser" tool works, and then we can define our own commands to perform complex tasks with a single prompt. 👇

@vaibhavk97:

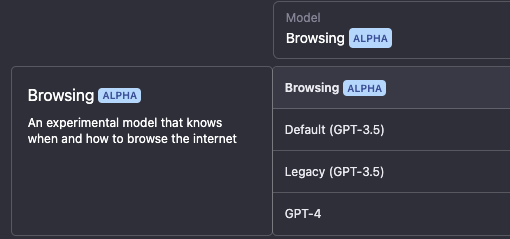

Here is one easter egg if you made it this far. Use the following prompt in your Default (GPT-3.5) model:

pastebin.com/9PiUiNdS

After a greeting message, if you ask a tool-related question, you will not get a response! The model supports tools, but they are not enabled.